Use any AI model you want

Cogitator works with models from OpenAI, Anthropic, Google, Groq, and others. Run a local model with Ollama if you want everything on your machine. You pick which model handles what, and you can change your mind anytime.

Most AI apps lock you into one provider. If prices change or a better model comes out, you're stuck. And running every single operation on the most expensive model wastes money on things that don't need it.

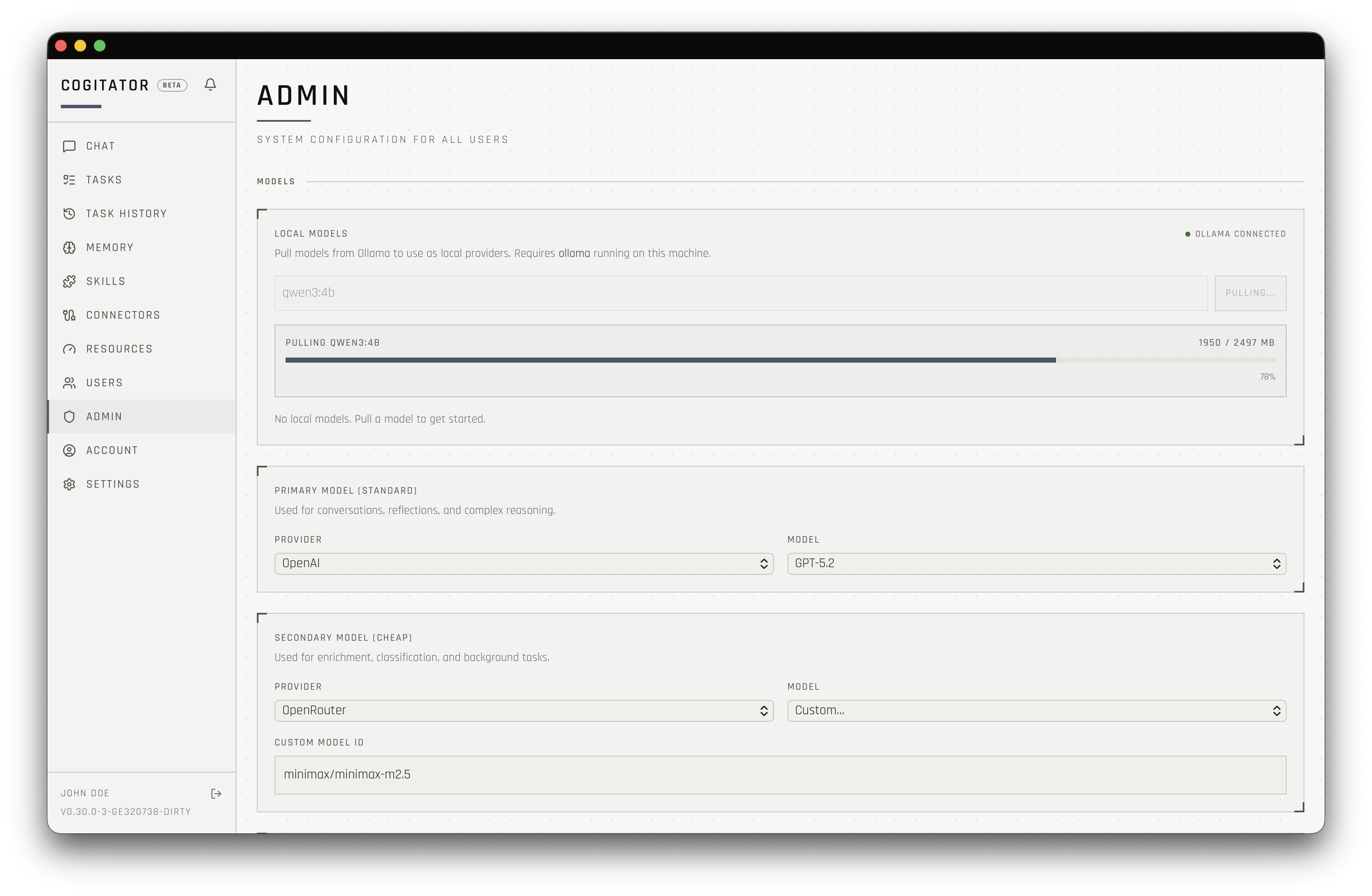

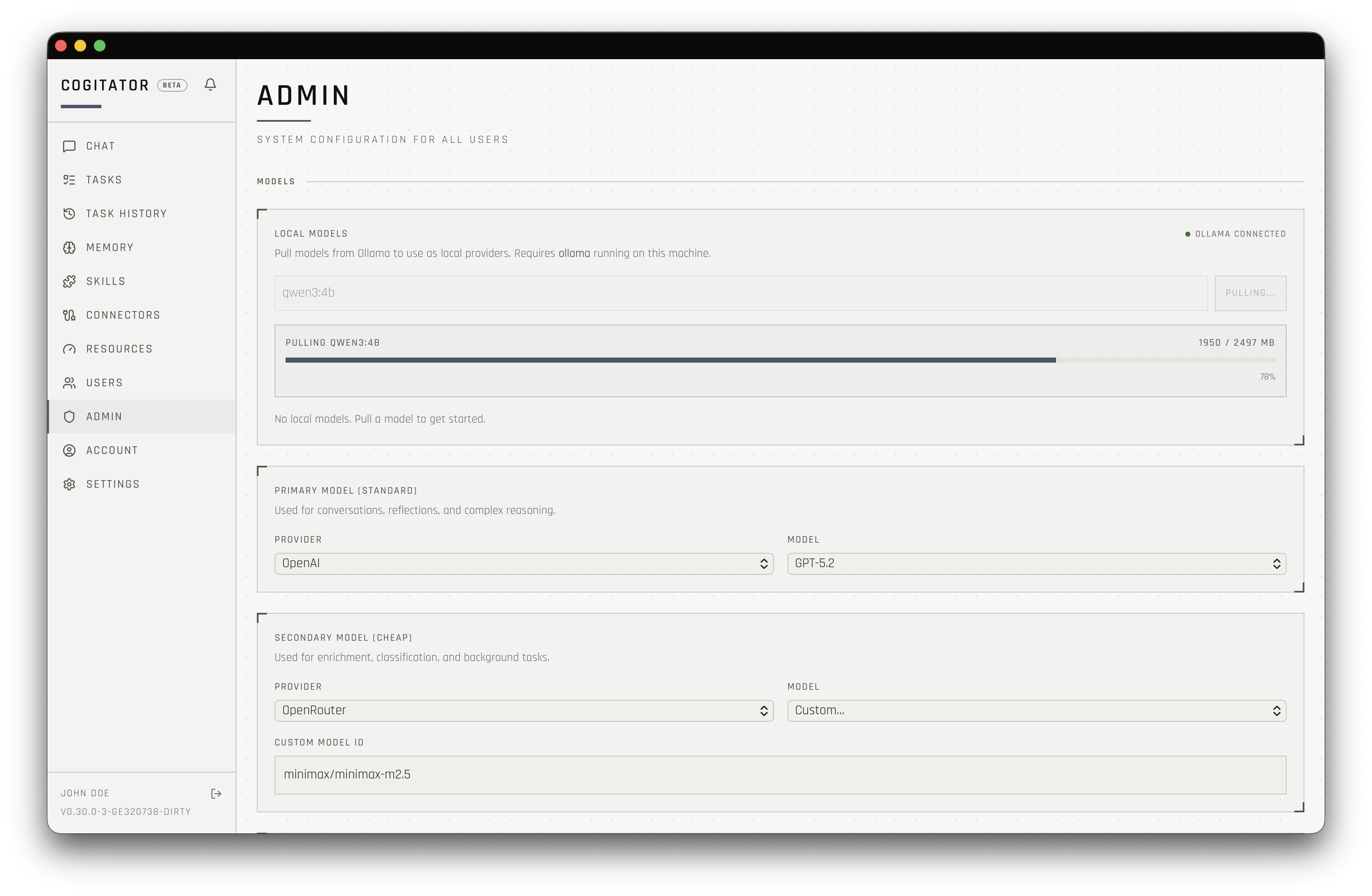

Connect one or more AI providers in settings: OpenAI, Anthropic, Google, Groq, Together, OpenRouter, or a local Ollama instance. Mix and match however you like.

Cogitator splits operations into two categories: complex work like conversations and reasoning, and routine work like organizing memories and running simple checks. You assign a model to each tier.
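To make the two-tier idea concrete, here is a minimal sketch of how an operation could be mapped to a tier and then to a model. It is not Cogitator's actual code: the operation names, the `model_for` helper, and the model names are all hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    COMPLEX = "complex"  # conversations and reasoning
    ROUTINE = "routine"  # organizing memories, simple checks


@dataclass(frozen=True)
class ModelChoice:
    provider: str
    model: str


# Hypothetical operation names; the real list is an assumption.
OPERATION_TIERS = {
    "conversation": Tier.COMPLEX,
    "reasoning": Tier.COMPLEX,
    "organize_memories": Tier.ROUTINE,
    "simple_check": Tier.ROUTINE,
}

# One model per tier. Provider and model names are placeholders.
TIER_MODELS = {
    Tier.COMPLEX: ModelChoice("anthropic", "large-model-placeholder"),
    Tier.ROUTINE: ModelChoice("ollama", "small-local-model-placeholder"),
}


def model_for(operation: str) -> ModelChoice:
    """Look up the operation's tier, then the model assigned to that tier."""
    return TIER_MODELS[OPERATION_TIERS[operation]]
```

In this sketch, `model_for("conversation")` returns the complex-tier model and `model_for("simple_check")` returns the routine-tier one, so changing a single entry in `TIER_MODELS` re-routes every operation in that tier.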

This split means 70-80% of operations run on inexpensive models. You get the same quality in conversations while spending far less on background processing. The expensive model focuses where it matters.
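A rough worked example shows why the split matters. The numbers below are assumptions made up for illustration, not Cogitator's real prices: 1,000 operations, 75% of them routine, a premium model at 10 cents per operation, and an inexpensive one at 1 cent. It also assumes every operation costs the same on a given model, which real workloads will not match exactly.

```python
def blended_cost(total_ops: int, routine_share: float,
                 complex_price: int, routine_price: int) -> int:
    """Total cost in cents when routine operations use the cheaper model."""
    routine_ops = round(total_ops * routine_share)
    complex_ops = total_ops - routine_ops
    return complex_ops * complex_price + routine_ops * routine_price


# Illustrative figures only (prices in cents per operation).
TOTAL_OPS = 1000
single_model = TOTAL_OPS * 10                      # everything on the premium model
two_tier = blended_cost(TOTAL_OPS, 0.75, 10, 1)    # 250 premium + 750 inexpensive
savings = 1 - two_tier / single_model

print(single_model, two_tier, round(savings * 100, 1))
```

Under these assumed prices the single-model bill is 10,000 cents and the two-tier bill is 3,250 cents, a reduction of about two thirds. The actual saving depends on the real price gap between the two models you pick.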

Use different companies for different tiers, or the same one for both. Connect as many providers as you want and swap between them freely.

Run Ollama for complete privacy. No data leaves your machine. Your conversations, your hardware, your rules.

See exactly what each model tier costs with daily charts. Know where your money goes and whether a cheaper model would do the job just as well.
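The data behind a daily per-tier chart can be pictured as a simple aggregation. The sketch below assumes a hypothetical record shape of (day, tier, cost in cents); the page does not describe how Cogitator actually stores usage, so treat the names and values as illustrative.

```python
from collections import defaultdict
from datetime import date

# Hypothetical usage records: (day, tier, cost in cents).
usage = [
    (date(2024, 1, 1), "complex", 120),
    (date(2024, 1, 1), "routine", 15),
    (date(2024, 1, 1), "routine", 10),
    (date(2024, 1, 2), "complex", 90),
]


def daily_cost_by_tier(records):
    """Sum cost per tier for each day, ready to plot as one bar group per day."""
    totals = defaultdict(lambda: defaultdict(int))
    for day, tier, cents in records:
        totals[day][tier] += cents
    # Convert nested defaultdicts to plain dicts for easier inspection.
    return {day: dict(tiers) for day, tiers in totals.items()}


report = daily_cost_by_tier(usage)
```

Comparing the two tiers' totals for a given day is what lets you judge whether the premium tier is earning its cost.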

Change models anytime from settings. No restart required. A new model dropped today? You can be using it in thirty seconds.
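One way a model change can take effect without a restart is to read the assignment at call time instead of caching it at startup. The `ModelSettings` class below is a hypothetical sketch of that pattern, not Cogitator's implementation, and the model names are placeholders.

```python
import threading


class ModelSettings:
    """Tier-to-model assignments that can be changed while the app runs."""

    def __init__(self, assignments):
        self._lock = threading.Lock()
        self._assignments = dict(assignments)

    def set_model(self, tier: str, model: str) -> None:
        # Swap the model for a tier; the next lookup sees the new value.
        with self._lock:
            self._assignments[tier] = model

    def get_model(self, tier: str) -> str:
        # Called for each operation, so no restart is needed after a change.
        with self._lock:
            return self._assignments[tier]


settings = ModelSettings({"complex": "old-model-placeholder",
                          "routine": "small-model-placeholder"})
before = settings.get_model("complex")
settings.set_model("complex", "new-model-placeholder")
after = settings.get_model("complex")
```

Because every operation asks `get_model` for the current assignment, a swap made in settings applies to the very next request.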